Guide: Integrating MOI with DeerFlow for RAG Application Development

Overview

This guide explains how to integrate DeerFlow, an open-source RAG application development engine, with the RAG service of MatrixOne Intelligence (MOI) to build deep retrieval-augmented generation (RAG) applications.

What is DeerFlow?

DeerFlow is an open-source RAG application development engine from ByteDance, designed to simplify building retrieval-augmented generation applications. Its core features are:

- End-to-end Support: Covers the full RAG chain, from document parsing, text segmentation, and vector embedding through retrieval and generation

- Out-of-the-box: Ships with multiple data source parsers and segmentation strategies for quick RAG application setup

- Flexible Extension: Supports custom tools and plugins for business-specific needs

- Multi-modal Support: Handles images, PPT slides, and other formats in addition to text

- Visual Interface: Provides a Web UI that non-technical users can easily use and manage

By standardizing RAG workflows, DeerFlow lets developers focus on business logic instead of underlying implementation details, which makes RAG applications faster to build.

Environment Preparation

System Requirements

- Python: 3.12 or higher

- Node.js: 22 or higher
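Before installing, you can confirm that both tools meet these minimums. The following is a minimal sketch that assumes bash and a `sort` that supports `-V` (version sort, available in GNU coreutils and recent macOS). The function names `version_ge`, `check_tool`, and `check_requirements` are hypothetical helpers for illustration, not part of DeerFlow.

```bash
# version_ge A B: succeed if version A >= version B.
# Relies on `sort -V` to put the smaller version first.
version_ge() {
  [ "$(printf '%s\n%s\n' "$2" "$1" | sort -V | head -n 1)" = "$2" ]
}

# check_tool NAME MIN CMD...: run CMD, extract the first dotted version
# number from its output, and compare it with MIN.
check_tool() {
  local name="$1" min="$2" raw ver
  shift 2
  if ! raw="$("$@" 2>&1)"; then
    echo "MISSING: $name (need >= $min)"
    return 1
  fi
  # e.g. "Python 3.12.4" -> "3.12.4", "v22.3.0" -> "22.3.0"
  ver="$(printf '%s\n' "$raw" | grep -Eo '[0-9]+(\.[0-9]+)+' | head -n 1)"
  if [ -n "$ver" ] && version_ge "$ver" "$min"; then
    echo "OK: $name $ver (>= $min)"
  else
    echo "TOO OLD: $name ${ver:-unknown} (need >= $min)"
    return 1
  fi
}

# check_requirements: check the minimums listed in this guide.
check_requirements() {
  local status=0
  check_tool python 3.12 python3 --version || status=1
  check_tool node 22 node --version || status=1
  return "$status"
}
```

Save it under any name (for example, the hypothetical `check_requirements.sh`) and run `source check_requirements.sh && check_requirements`. It prints one line per tool and returns a non-zero status if either tool is missing or too old.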

DeerFlow Installation and Deployment

For full details, see the DeerFlow repository: https://github.com/bytedance/deer-flow

# Step 1: Clone the repository

git clone https://github.com/bytedance/deer-flow.git

cd deer-flow

# Step 2: Install Python dependencies

uv sync

# Step 3: Initialize configuration files

cp .env.example .env

cp conf.yaml.example conf.yaml

# Step 4: Install PPT generation support (optional)

# On macOS using Homebrew

brew install marp-cli

# Step 5: Install Web UI dependencies (optional)

cd web

pnpm install
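Step 3 uses plain `cp`, so running it again would overwrite an `.env` or `conf.yaml` you have already edited. If you script the setup, a guarded copy avoids that. This is a minimal sketch assuming bash; `init_config` is a hypothetical helper, not a DeerFlow command.

```bash
# init_config [DIR]: copy .env.example -> .env and conf.yaml.example -> conf.yaml
# inside DIR (default: current directory), never overwriting an existing file.
init_config() {
  local dir="${1:-.}" src dst
  for src in .env.example conf.yaml.example; do
    dst="${src%.example}"   # strip the ".example" suffix
    if [ ! -f "$dir/$src" ]; then
      echo "missing template: $dir/$src" >&2
      return 1
    fi
    if [ -e "$dir/$dst" ]; then
      echo "keeping existing $dst"
    else
      cp "$dir/$src" "$dir/$dst"
      echo "created $dst from $src"
    fi
  done
}
```

Run `init_config` from the repository root, or pass the path explicitly, for example `init_config ~/deer-flow`.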

MOI RAG Workflow Configuration

Create Workflow

For step-by-step instructions on creating a workflow, see the Workflow article in the MOI documentation.

A basic RAG workflow must include at least a parsing node, a segmentation node, and an embedding node.

Obtain API Credentials

- Find the API information in the bottom-left corner of the MOI workspace.

- Copy the API Key.

- Record the API URL, for example: https://freetier-01.cn-hangzhou.cluster.matrixonecloud.cn

- DeerFlow access point: {API_URL}

DeerFlow Configuration for MOI Integration

Configure Environment Variables

Edit the .env file in the project root directory:

# MOI is a hybrid database that mainly serves enterprise users (https://www.matrixorigin.io/matrixone-intelligence)

RAG_PROVIDER=moi

MOI_API_URL="https://freetier-01.cn-hangzhou.cluster.matrixonecloud.cn"

MOI_API_KEY="moi-key-xxxxxxxxxxxx"

MOI_RETRIEVAL_SIZE=10

MOI_LIST_LIMIT=10
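A typo in `.env` is easy to miss until retrieval fails at runtime. The sketch below checks the values this section sets, assuming bash and a simple `KEY=VALUE` file. `env_value` and `validate_moi_env` are hypothetical helpers; the parser is deliberately simplified, and the `https://` and placeholder checks are assumptions based on the example values above, not rules enforced by DeerFlow or MOI.

```bash
# env_value FILE KEY: print the last value assigned to KEY in a KEY=VALUE file,
# stripping one pair of surrounding double quotes. Comment lines are skipped;
# `export`, escapes, and multi-line values are not handled.
env_value() {
  local file="$1" key="$2" line value=""
  while IFS= read -r line || [ -n "$line" ]; do
    case "$line" in
      "$key="*)
        value="${line#*=}"
        value="${value#\"}"
        value="${value%\"}"
        ;;
    esac
  done < "$file"
  printf '%s' "$value"
}

# validate_moi_env [FILE]: report problems with the MOI settings on stderr;
# return non-zero if any were found.
validate_moi_env() {
  local file="${1:-.env}" errors=0 v name
  if [ ! -f "$file" ]; then
    echo "no such file: $file" >&2
    return 1
  fi

  v="$(env_value "$file" RAG_PROVIDER)"
  if [ "$v" != "moi" ]; then
    echo "RAG_PROVIDER should be moi (got '$v')" >&2
    errors=$((errors + 1))
  fi

  # Assumption: the API URL copied from the MOI workspace starts with https://
  v="$(env_value "$file" MOI_API_URL)"
  case "$v" in
    https://?*) ;;
    *) echo "MOI_API_URL does not look like an https:// URL (got '$v')" >&2
       errors=$((errors + 1)) ;;
  esac

  # Assumption: a key containing "xxxx" is still the placeholder from this guide
  v="$(env_value "$file" MOI_API_KEY)"
  case "$v" in
    ''|*xxxx*) echo "MOI_API_KEY is empty or still a placeholder" >&2
               errors=$((errors + 1)) ;;
  esac

  for name in MOI_RETRIEVAL_SIZE MOI_LIST_LIMIT; do
    v="$(env_value "$file" "$name")"
    case "$v" in
      ''|*[!0-9]*) echo "$name should be a positive integer (got '$v')" >&2
                   errors=$((errors + 1)) ;;
      *) if [ "$v" -le 0 ]; then
           echo "$name should be a positive integer (got '$v')" >&2
           errors=$((errors + 1))
         fi ;;
    esac
  done

  [ "$errors" -eq 0 ]
}
```

From the project root, `validate_moi_env .env && echo "MOI settings look OK"` runs the check after the helpers are sourced.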

Configure Base Language Model

Edit the conf.yaml file to configure the base LLM:

BASIC_MODEL:

  # Model service API address (supports local deployments like Ollama)

  base_url: http://localhost:11434/v1

  # Model name (must support tool calling functionality)

  model: "qwen2.5:7b"

  # API key (if required)

  api_key: xxxxxx

Important: The selected base model must support tool calling; without it, the RAG application will not work properly.
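One way to check tool-calling support before launching DeerFlow is to send the model a request that offers a single dummy tool and see whether it answers with a tool call. This sketch assumes bash, `curl`, and an OpenAI-compatible `/chat/completions` endpoint under `base_url` (Ollama serves one under `http://localhost:11434/v1`). The helper names and the `get_current_time` tool are hypothetical, and the check is a heuristic text match: a model that supports tools may still choose not to call one, so treat a negative result as a reason to check the model's documentation rather than as proof.

```bash
# tool_probe_payload MODEL: print a minimal OpenAI-style chat request that
# offers one dummy tool. MODEL must not contain double quotes or backslashes.
tool_probe_payload() {
  cat <<EOF
{"model": "$1",
 "messages": [{"role": "user", "content": "What time is it? Use the available tool."}],
 "tools": [{"type": "function",
            "function": {"name": "get_current_time",
                         "description": "Return the current time",
                         "parameters": {"type": "object", "properties": {}}}}]}
EOF
}

# probe_tool_calling BASE_URL MODEL [API_KEY]: POST the probe to
# BASE_URL/chat/completions and report whether the reply contains a tool call.
probe_tool_calling() {
  local base_url="${1%/}" model="$2" api_key="${3:-unused}" reply
  reply="$(tool_probe_payload "$model" | curl -sS \
    -H "Content-Type: application/json" \
    -H "Authorization: Bearer $api_key" \
    --data-binary @- "$base_url/chat/completions")" || return 1
  # Heuristic: look for a non-empty "tool_calls" array in the raw JSON text.
  if printf '%s' "$reply" | grep -Eq '"tool_calls" *: *\[ *\{'; then
    echo "$model returned a tool call"
  else
    echo "no tool call from $model; reply was:" >&2
    printf '%s\n' "$reply" >&2
    return 1
  fi
}
```

For the configuration above, the call would be `probe_tool_calling http://localhost:11434/v1 qwen2.5:7b`. If the server rejects the request, its error body is printed, which usually states the reason.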

Launch Application

After completing the configuration, you can launch the DeerFlow application:

# Execute in the project root directory

uv run main.py

If you installed the Web UI dependencies (Step 5), start the backend and frontend services together in development mode:

# Execute in the project root directory

# On macOS/Linux

./bootstrap.sh -d

# On Windows

bootstrap.bat -d
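The services take a moment to start, so a script that opens a browser or runs a smoke test should wait until they are listening. This sketch polls a TCP port using bash's built-in `/dev/tcp` redirection (bash only, not `sh`). The helper names are hypothetical, and the ports in the usage example (3000 for the Web UI, 8000 for the backend) are assumed defaults; check the DeerFlow README if your setup differs.

```bash
# port_open HOST PORT: succeed if a TCP connection to HOST:PORT can be opened.
port_open() {
  (exec 3<>"/dev/tcp/$1/$2") 2>/dev/null
}

# wait_for_port HOST PORT [TRIES] [DELAY]: poll until the port accepts
# connections, trying TRIES times with DELAY seconds between attempts.
wait_for_port() {
  local host="$1" port="$2" tries="${3:-30}" delay="${4:-1}" i
  for ((i = 1; i <= tries; i++)); do
    if port_open "$host" "$port"; then
      echo "$host:$port is accepting connections"
      return 0
    fi
    if [ "$i" -lt "$tries" ]; then
      sleep "$delay"
    fi
  done
  echo "$host:$port did not open after $tries attempts" >&2
  return 1
}
```

For example, `wait_for_port localhost 3000 60 1 && echo "Web UI is up"` waits up to about a minute for the assumed Web UI port.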

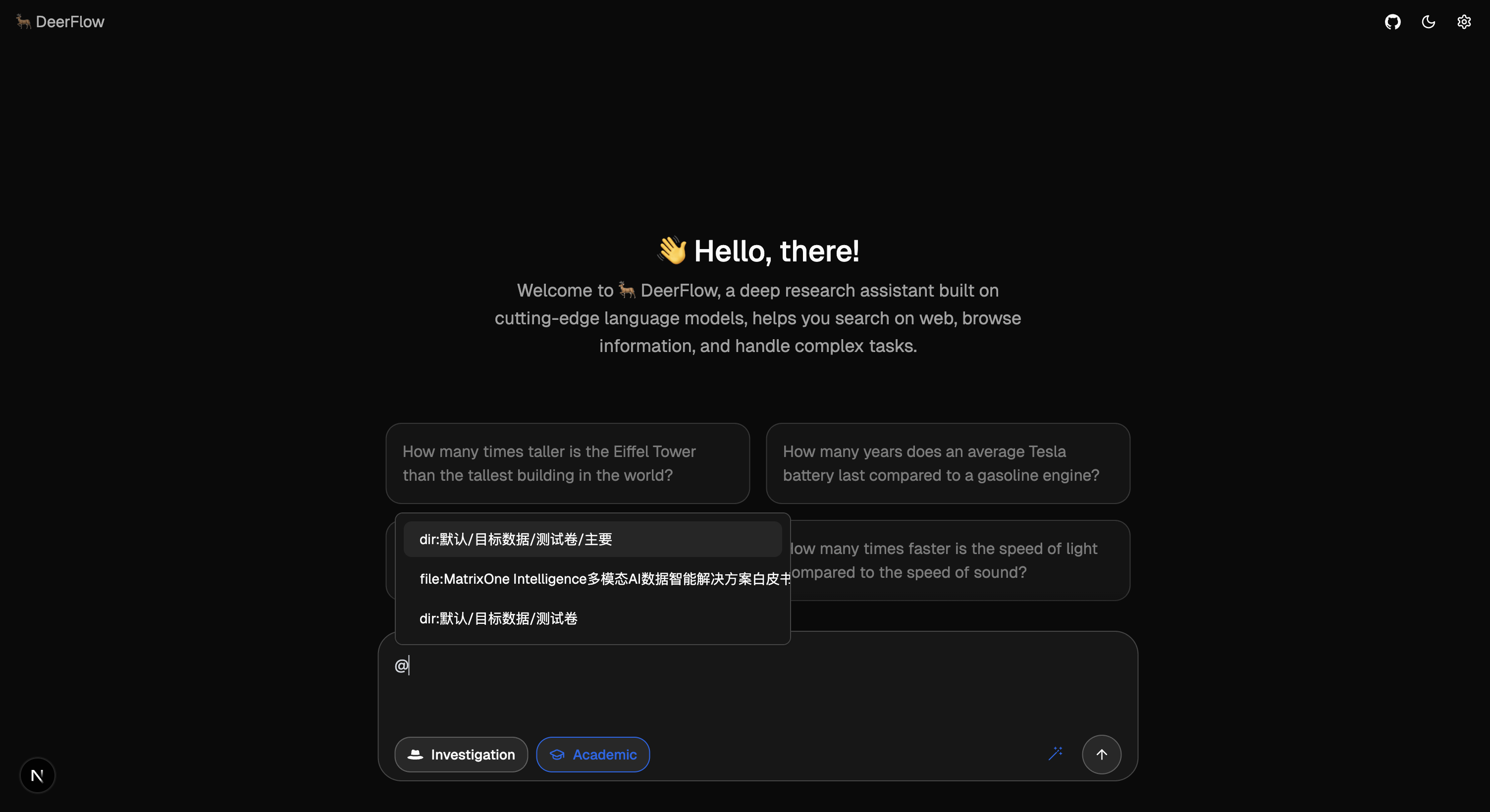

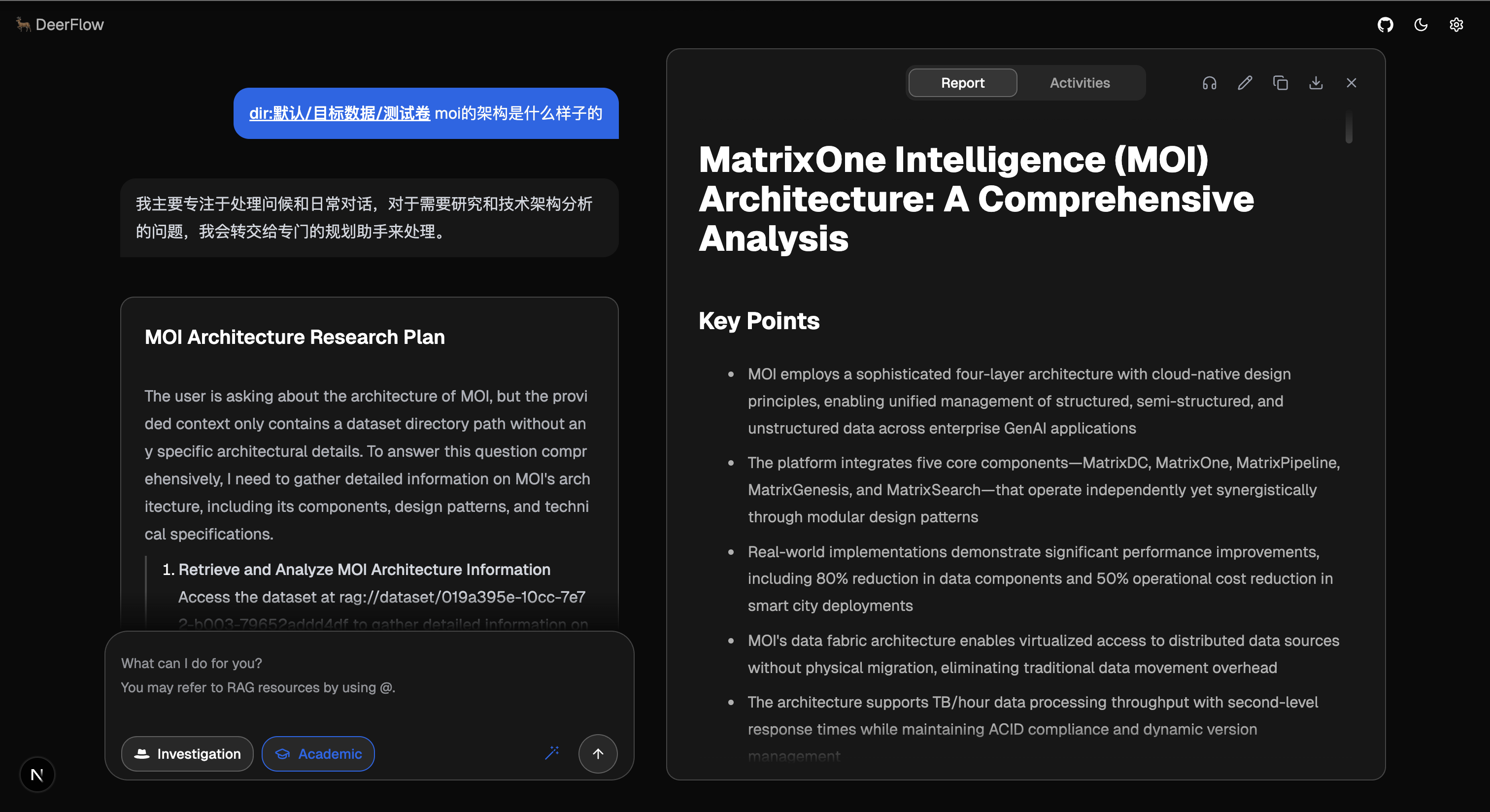

Click Get Started to open the conversation page, then type @ in the input box to reference files processed on MOI. Only the first ten files are listed at the moment; to reach other files, type a file name in the input box to match it.

Note

The list currently shows every processed file, including files that have not gone through a text embedding node. Select only files that have been processed by a text embedding node.

After completing these steps, DeerFlow is integrated with the MOI RAG service, and you can use it to build retrieval-augmented generation applications.